The Token Economy: Tokenmaxxing Is Stupid Until It Isn’t

Meta engineers burned 60 trillion AI tokens in 30 days. Anthropic has compressed product cycles from months to days. Meanwhile, nearly 90% of firms say AI has had no impact on productivity or employment. All three things are true. The difference is not access to AI. It is the operating model around it.

Meta engineers reportedly had an internal leaderboard called Claudeonomics. It was available to all 85,000 employees, and 60 trillion "AI tokens" were burned in 30 days. The company even created digital badges like "Token Legend" and "Cache Wizard." Some employees reportedly left agents running overnight to climb the rankings.

On a recent podcast, Jensen Huang said something that sounds wild but is far more plausible if you extrapolate current trends out: if a $500,000 engineer isn't burning at least $250,000 in tokens a year, he'd be "deeply alarmed."

At the same time, economists are calling it a paradox.

A recent NBER study of 6,000 executives across the US, UK, Germany and Australia found that nearly 90% of firms said AI has had no impact on employment or productivity over the past three years. Average usage was 1.5 hours a week. Apollo's chief economist Torsten Slok invoked the ghost of Robert Solow: "You can see AI everywhere except in the macroeconomic data."

So which is it? Engineers burning $4m of tokens a month to ship features no company has ever shipped before? Or thousands of CEOs reporting no productivity gain at all?

The answer is both.

And the gap between those two things is where your career, company, and prospects get made or broken for the next 10 years.

Here's the equation every company now needs to optimize for:

Outcome = Tokens × Intelligent Operating Model

Where: Intelligent Operating Model = observability, autonomy, deployment speed, accountability.

Model this with whatever outcome you want — more leads, faster deploys, new products shipped, more uptime. Tokens are the fuel. The operating model is the organization you've built to burn them.
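A toy sketch of why the relationship is multiplicative rather than additive. It assumes, purely for illustration, that the operating model can be collapsed into a single 0-to-1 conversion score; the function name, the score, and the token counts are all hypothetical and not something the equation above specifies.

```python
def outcome(tokens: float, operating_model_score: float) -> float:
    """Outcome = Tokens x Operating Model, with the model as an assumed 0-to-1 score."""
    if not 0.0 <= operating_model_score <= 1.0:
        raise ValueError("operating_model_score must be between 0 and 1")
    return tokens * operating_model_score


TOKENS = 1_000_000  # hypothetical: the same fuel for both companies

seat_buyer = outcome(TOKENS, 0.0)        # bought seats, no operating model
converter = outcome(TOKENS, 0.5)         # converts half the fuel into outcomes
doubled_fuel = outcome(2 * TOKENS, 0.0)  # more tokens, still no operating model

print(seat_buyer, converter, doubled_fuel)  # 0.0 500000.0 0.0
```

With a score of zero, doubling the tokens changes nothing, which is the seat-buying scenario in miniature.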

The Meta engineers burning $4m in tokens and the CEOs reporting no productivity gain have one primary distinction: They’re running different operating models.

One is built to convert tokens into features.

The other bought ChatGPT seats and called it transformation. AI adoption alone is not effective. You don’t get productivity from buying seats like you did with SaaS. You need an effective operating model that produces a faster speed to outcome. Most companies don’t have this.

That explains the AI productivity gap.

AI adoption theatre is over.

The rest of this is an unpacking of how you get towards the right operating model.

It’s Dangerous to Wave away AI as Hype

Why change your operating model and pivot the whole company if AI is hype?

The AI skeptics make some great points:

AI is a circular economy. Nvidia funds OpenAI, OpenAI funds Microsoft, Microsoft funds Nvidia. Everyone sells to everyone else's supply chain.

The labs are unprofitable. The WSJ just published confidential financials from OpenAI and Anthropic ahead of their IPOs. OpenAI projects spending $121 billion on compute in 2028 alone, with losses of $85 billion that year. Break-even? After 2030. Maybe.

The gross margins are negative. On every dollar of silicon, one analyst estimates OpenAI makes roughly 68 cents in revenue. That's 32 cents underwater on inference and training alone, before paying a single engineer. (Anthropic, notably, clears the bar at around $1.70 per compute dollar.)

Which is why Anthropic is the one throttling first. They're the ones doing the math. And now they are having to play catch-up on compute, with recent deals with Amazon, CoreWeave, Google, and even xAI.

The throttled reasoning tokens on the Max plan are the first sign of negative margins starting to bite:

Anthropic started throttling reasoning token use on the $200-a-month Max plan, the "unlimited" tier. It is a case study in what unlimited actually costs.

OpenAI's Nick Turley went on the BG2 Pod and said flat-rate AI pricing is like an unlimited electricity plan. It just doesn't make sense.

Ramp launched a product whose entire pitch is: your CFO cannot see where your AI spend is going. Across Ramp's customer base, average monthly AI token spend has gone up 13x in a year.

All of that is true. If you're reading the cash flow statement, you are reading it correctly.

But the economists piling on are making a bigger and more dangerous call. The NBER study, Torsten Slok, and Acemoglu's model (which shows 0.5% growth over a decade from AI) are all saying the same thing: the average firm isn't getting anything out of AI.

And they're right about the average firm. And that’s a dangerous thing. The cash flow statement itself is not wrong. It is just looking at the fuel bill before the AI factory has been designed.

Demis Hassabis, co-founder of DeepMind and Nobel laureate for AlphaFold, said it cleanest:

AI is overhyped in the short term and drastically underestimated in the long term.

Skeptics will always sound smart, especially when grounded in backward-looking data. But if you look inside the companies getting the benefit from AI, it becomes clear that the long term belongs to a different population.

Tokenmaxxing is not the joke you think it is

From a distance, Meta looks like it is speed-running its own unprofitability. They burnt roughly $80bn on VR and mostly gave up. They've committed to nearly 10GW of data center capacity and are tracking towards $100B in compute buildout. And then they hand all of that to 85,000 employees and have them compete on a leaderboard.

The top user burned 281 billion tokens in 30 days. That's roughly $4.2 million at Opus list prices, for one employee.
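The arithmetic behind that figure can be reproduced in a few lines. The text only says "Opus list prices"; the flat $15 per million tokens used here is an assumption chosen because it lands on the headline number. A real bill would blend input, output, and cached tokens at different rates.

```python
TOKENS_BURNED = 281_000_000_000         # from the text: top user, 30 days
ASSUMED_USD_PER_MILLION_TOKENS = 15.0   # assumption: one flat rate for every token


def token_cost_usd(tokens: int, usd_per_million: float) -> float:
    """Convert a raw token count into dollars at a flat per-million-token rate."""
    return tokens / 1_000_000 * usd_per_million


cost = token_cost_usd(TOKENS_BURNED, ASSUMED_USD_PER_MILLION_TOKENS)
print(f"${cost:,.0f}")  # $4,215,000
```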

Clearly ridiculous, bubble-like behavior that is unsustainable (Meta has reportedly closed the leaderboard since its details leaked). But if you just revel in the ridiculous, you miss the signal.

Input metrics always look ridiculous until the output metric catches up.

Cloud spend was a garbage metric in 2012. Now it's one of the most scrutinized line items in a public company's financials.

Revenue per employee was a garbage metric when capital was free. Now it's the ultimate filter for whether you've built a high-leverage platform.

Dollars per kilogram to orbit was a garbage metric when rockets were government programmes. Now it's how SpaceX runs the company.

Token spend will go the same way. Not as an end in itself. As the input that creates shipping velocity and revenue per employee. Tokens are the proxy — the visible, measurable thing you can put on a leaderboard that correlates, imperfectly, with engineers doing the thing.

If you can ship more code, and critically, more features to production, you can win users and new revenue. The canonical example is Anthropic. Anthropic shipped 120+ features in the first 90 days of 2026 — more than one a working day across Claude Code, Cowork, API, and models.

🛳 That’s a lot of product.

Anthropic is the cleanest example of the loop: more shipping creates more usage, more feedback, more revenue, and more permission to keep shipping. This is a feature velocity we've never seen before, and it is giving them a competitive advantage in the most competitive battle technology has ever seen.

Anthropic’s Cat Wu told Lenny's Podcast that Anthropic itself has gone from 6 months, to 1 month, to between 1 week and 1 day for new product releases.

The velocity inside companies whose operating model is fully AI-enabled is INSANE.

Feature velocity is a power law. The companies that ship products faster grow their revenue faster, grow market cap faster, and attract investor dollars faster. Everyone else compounds in the opposite direction. The CEO of HubSpot has a name for the upside of this equation: OutcomeMaxxing. What is the maximum outcome you can generate for the tokens you put in?

But that forces us to ask a harder question: How do you know you're heading in the right direction? What's the equation inside your company that measures the inputs that create the outputs from AI token usage? Elon Musk famously measures dollars per kilogram to orbit.

Outcomes are lagging indicators. So the first management problem is observability: can you see which tokens became shipped work, and which tokens became slop?

How Do You Measure an Intelligent Operating Model?

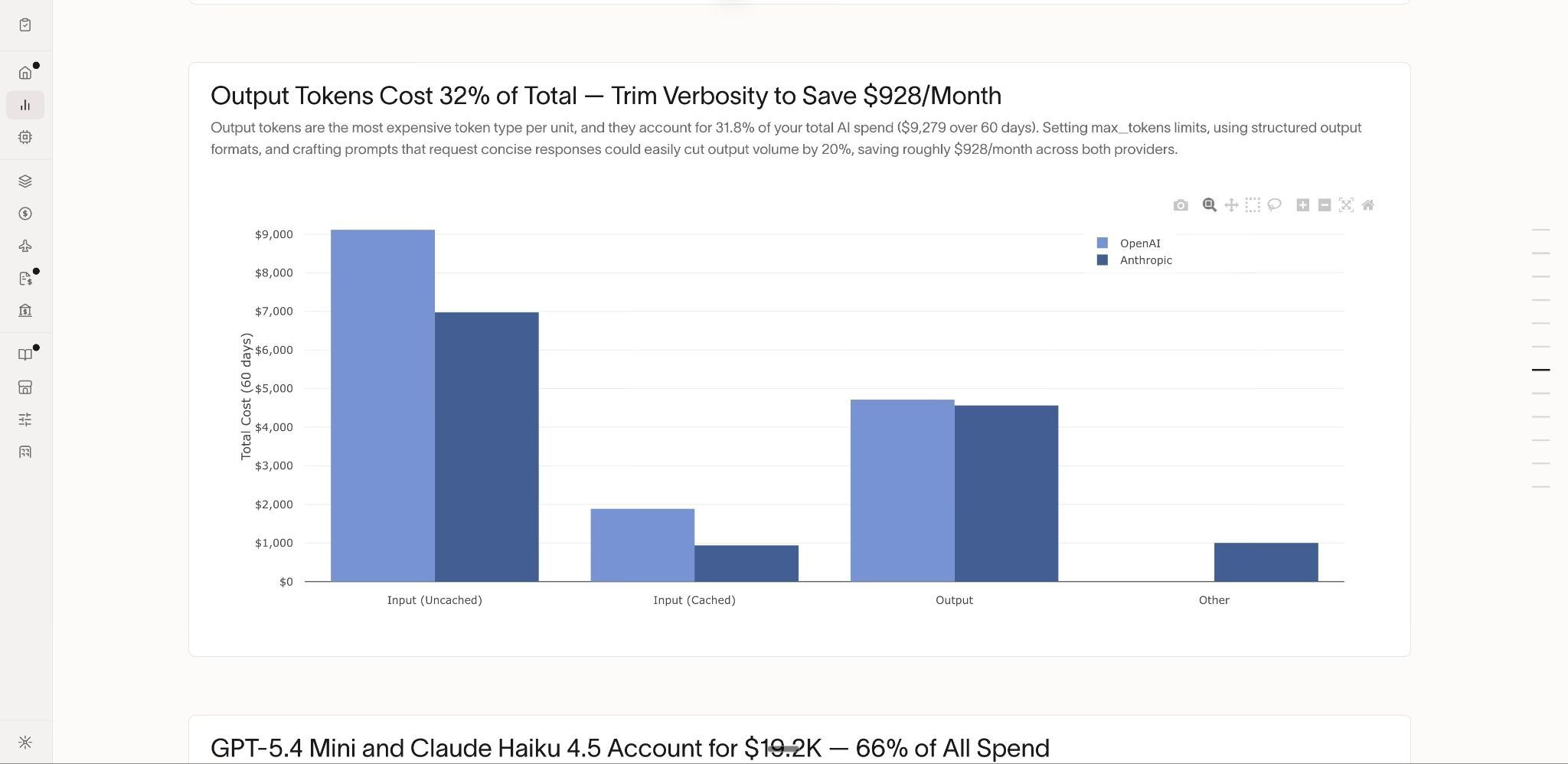

New data from Ramp shows costs are spiking across the industry:

Average monthly AI token spend across Ramp customers is up 13x in a year.

In one out of every four months, the biggest AI spenders see costs jump 50% or more with no warning.

A single prompt template change can triple a bill overnight. A junior engineer experimenting on a Friday can burn through a quarterly budget by Monday.
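A minimal sketch of the kind of check that would catch those jumps: flag any month where spend rises 50% or more over the month before. The spend series is made up for illustration, and the 50% threshold simply mirrors the figure quoted above; it is not Ramp's method.

```python
def months_with_spikes(monthly_spend: list, threshold: float = 0.5) -> list:
    """Return the indexes of months whose spend rose by `threshold` or more
    relative to the previous month."""
    spikes = []
    for i in range(1, len(monthly_spend)):
        previous, current = monthly_spend[i - 1], monthly_spend[i]
        # Skip months with no prior spend to avoid dividing by zero.
        if previous > 0 and (current - previous) / previous >= threshold:
            spikes.append(i)
    return spikes


# Hypothetical monthly AI token spend in USD.
spend = [10_000, 11_000, 18_000, 19_000, 30_000]
print(months_with_spikes(spend))  # [2, 4]
```

Run on a daily series instead of a monthly one, the same check would flag the Friday experiment before Monday.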

Most companies haven’t figured out how to turn token use into effective outcomes. It’s just burn more and hope for the best.

(Obviously, some companies are quite sophisticated. But it’s clear that’s not universally true, far from it.)

And the best tell that this is a real problem is in the Ramp engineering team's own account of discovering a token-burning black hole in their stack:

It is 4:23 p.m. on a Tuesday in early spring, and a latency alert fires at Ramp HQ. The system is slowing, but every downstream dependency is within SLA, and the error rates are stable. So the team audits their own token volume and finds the culprit: a software package upgrade caused a Gemini model to generate phantom reasoning tokens by default.

Nobody asked for a chain of thought, but the model produced it anyway and billed for it.

Ramp says AI token overspend is much worse than SaaS sprawl — at least there you had a monthly bill to check. With AI tokens, what do you check? What’s the dashboard? So Ramp built what they needed: an ability to measure token usage directly, the same way you can measure and manage SaaS spend.

When you can see the token cost, you can cut it by 20% in an afternoon.
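To make the "phantom reasoning tokens" story concrete, here is a hedged sketch of an audit over usage logs: total up reasoning tokens that were billed on calls where nobody asked for reasoning. The `UsageRecord` fields and call-site names are hypothetical; they are not Ramp's schema or any provider's API response format.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    call_site: str             # hypothetical label for where the call came from
    reasoning_requested: bool  # did the caller ask for a chain of thought?
    reasoning_tokens: int      # reasoning tokens the provider billed
    output_tokens: int         # ordinary output tokens


def phantom_reasoning_by_call_site(records):
    """Sum billed reasoning tokens per call site where reasoning was not requested."""
    totals = {}
    for record in records:
        if not record.reasoning_requested and record.reasoning_tokens > 0:
            totals[record.call_site] = (
                totals.get(record.call_site, 0) + record.reasoning_tokens
            )
    return totals


# Made-up log entries for illustration.
records = [
    UsageRecord("receipt_parser", False, 1200, 300),
    UsageRecord("receipt_parser", False, 800, 250),
    UsageRecord("policy_agent", True, 2000, 500),
    UsageRecord("memo_writer", False, 0, 400),
]
print(phantom_reasoning_by_call_site(records))  # {'receipt_parser': 2000}
```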

Two things are now true:

The teams spending more on AI (at least among Ramp customers) are growing faster, because it improves their shipping velocity.

Ungoverned token spend is unsustainable at current growth rates.

So the NBER study is right that most firms aren't getting productivity gains from AI. They're using it 1.5 hours a week. They bought ChatGPT seats and called it transformation. The BCG "AI brain fry" study found that workers using four or more AI tools actually report lower productivity than those using three or fewer.

Unmeasured proliferation of AI tools is a drag.

AI adoption is not effective AI adoption. In most cases, it is the opposite.

The thing observability gives you isn't just "cut waste." It's the ability to tell which teams are burning tokens productively and which are running agents in idle loops, and who’s just using ChatGPT to produce slop instead of doing the work.

| Bad Metric | Better Metric |
| --- | --- |
| Tokens burned per employee | Tokens burned per shipped feature |
| % of AI adoption | Token cost per customer onboarded |
| “AI” mentions in earnings calls | Token cost per fraud case reviewed |
| Number of AI tools adopted | Token cost per dollar of incremental revenue |
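The better metrics all share one shape: token cost divided by a count of outcomes. A minimal sketch, assuming you can already attribute token cost and shipped features to a team (the team names and numbers below are invented):

```python
from typing import Optional


def cost_per_outcome(token_cost_usd: float, outcomes: int) -> Optional[float]:
    """Token cost per unit of outcome, or None when nothing shipped."""
    if outcomes == 0:
        return None  # spend with no outcome: there is no ratio to report
    return token_cost_usd / outcomes


# Hypothetical per-team figures for one month.
teams = {
    "payments": {"token_cost_usd": 12_000.0, "features_shipped": 6},
    "growth": {"token_cost_usd": 30_000.0, "features_shipped": 0},
}

report = {
    name: cost_per_outcome(team["token_cost_usd"], team["features_shipped"])
    for name, team in teams.items()
}
print(report)  # {'payments': 2000.0, 'growth': None}
```

Returning `None` instead of zero keeps a team that burned tokens and shipped nothing from looking like the cheapest team on the dashboard.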

Tokenmaxxing for status is waste. Tokenmaxxing for shipping is the new moat. Freda Duan from Altimeter has a useful equation for why AI spend explodes:

AI spend = users × tasks/user × tokens/task × $/token

The first half is adoption: more users perform more tasks as the company builds more agentic workflows. The second half is cost: the labs' models burn more tokens per task, multiplied by the cost of each token. Multiply the two halves together and AI spend increases. It’s the fastest-growing line item for most high-growth companies.
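Because the four terms multiply, modest growth in two of them compounds quickly. A small sketch of the equation with invented inputs (none of these numbers come from Altimeter or Ramp):

```python
def ai_spend(users: int, tasks_per_user: float,
             tokens_per_task: float, usd_per_token: float) -> float:
    """AI spend = users x tasks/user x tokens/task x $/token."""
    return users * tasks_per_user * tokens_per_task * usd_per_token


# Hypothetical baseline month.
baseline = ai_spend(users=100, tasks_per_user=20,
                    tokens_per_task=50_000, usd_per_token=0.00001)

# Twice the users and twice the tokens per task, same price per token.
grown = ai_spend(users=200, tasks_per_user=20,
                 tokens_per_task=100_000, usd_per_token=0.00001)

print(f"baseline ${baseline:,.2f}, grown ${grown:,.2f}")
print(f"growth multiple: {grown / baseline:.1f}x")  # growth multiple: 4.0x
```

Doubling adoption and doubling tokens per task quadruples the bill even though the price per token never moved.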

Are those tokens being burned worth it? What is the fundamental value they create? Here it would be lovely if we could just multiply the number of tasks completed by value per task:

AI value = tasks completed × value per task

But we all know quantifying the value of a task can be more art than science. Still, companies will begin to hunt for ROI from token spend, and it will change how vendors price too.
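Even a rough value-per-task estimate is enough to compare workflows. A hedged sketch that pairs the value equation with a simple ratio of value to token spend; the ratio helper and every number here are illustrative assumptions, not a standard ROI definition.

```python
def ai_value(tasks_completed: int, value_per_task_usd: float) -> float:
    """AI value = tasks completed x value per task."""
    return tasks_completed * value_per_task_usd


def return_on_token_spend(value_usd: float, spend_usd: float) -> float:
    """Dollars of estimated value per dollar of token spend."""
    if spend_usd <= 0:
        raise ValueError("spend_usd must be positive")
    return value_usd / spend_usd


SPEND_USD = 1_000.0  # hypothetical: same token bill for both workflows

# Low-value task, e.g. drafting text nobody reads (made-up estimate).
cheap_task = return_on_token_spend(ai_value(1_000, 0.5), SPEND_USD)

# High-value task, e.g. a reviewed case a person would otherwise handle (made-up estimate).
valuable_task = return_on_token_spend(ai_value(1_000, 40.0), SPEND_USD)

print(cheap_task, valuable_task)  # 0.5 40.0
```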

In fact, it has already started. Salesforce now sells Agentforce Flex Credits, with pricing that looks less like SaaS seats and more like paid units of work: credits × cases × users. IBM Bob has Bobcoins. Every vendor is inventing a currency for machine labor. Which looks to me like the market trying to price something SaaS never had to price directly: work done. Labor.

Tokens are only expensive if the task they complete is cheap.

Without observability, it’s impossible to know how many tokens were spent on what task. With observability, at least you’re grounded in data. Once you can see where the tokens go, you can ask the question Jack Dorsey asked.

What does a company rebuilt around AI token use look like? Look at Block.

In February, I wrote about Block cutting 40% of its staff and the stock ripping 24%. I asked whether it was AI-washing, genuine restructuring, or both. The answer has since filled in. Dorsey went on Sequoia's Long Strange Trip podcast in April and described the new ideal architecture of the company in a post-AI world:

"Today Block has maybe 5 layers of management between CEO and IC. The goal is to get that to 2 or 3 or ideally move to a world where there is no middle management."

Discourse and reactions are over-indexed on the cuts, and under-indexed on the implications of removing middle management as a coordination layer.

The human cost is real. The management lesson is also real.

Block is asking a question most large companies are avoiding: If AI can observe work, route information, summarize decisions, expose bottlenecks, and give every IC leverage, how many coordination layers does the company actually need?

Middle management exists to create visibility, route information, translate priorities, and escalate decisions. AI-native tooling starts to automate parts of that coordination layer. Once every engineer has tokens, every team has dashboards, and every decision has an audit trail, you need fewer people whose job is merely to know what is happening and pass it upwards.

That does not make layoffs good. It does make the question unavoidable.

The bubble can burst and the operating model can still win.

AI may still be a bubble.

The lab economics are not hand-wavy bad. They are mathematically violent.

The WSJ reported that OpenAI expects to spend $121 billion on compute in 2028 alone, producing an $85 billion loss that year, and does not expect to break even until after 2030.

The same reporting suggests the labs are now showing investors two versions of profitability: one that includes model training costs, and one that strips them out. Without training costs, the business can look almost profitable. With training costs, the furnace is still roaring. OpenAI is also reportedly facing concerns about whether revenue can rise fast enough to cover more than $600 billion of long-term compute contracts.

That all sounds like a bubble, and these companies need to IPO. What happens after could be messy. Bubbles do happen, and when they do, they destroy capital. They do not un-invent operating models.

The dot-com bubble wiped out trillions. It did not stop every company becoming an internet company. Cloud still happened, e-commerce still happened. AI has the potential to be 10x larger than dot com and possibly more.

If AI corrects hard, the weakest token burners get exposed first. The companies buying seats and calling it a transformation will cut budgets, freeze pilots, and declare the whole thing over.

The companies that learned how to turn tokens into outcomes will keep going.

Tokens are calories for the AI firm. Some companies are eating more and getting slower. More tools. More subscriptions. More agents looping in the background. More slop. Other companies are metabolizing those calories into muscle, with faster product cycles, tighter feedback loops, and higher revenue per employee.

You should be asking yourself how your company and career change in a world where:

Middle management gets replaced.

Every company has to measure tokens.

Those who measure tokens effectively compound their advantage.

Those who don't, watch their multiples implode (like SaaS) and become zombies.

Software moves from eating the world to eating labor.

How do you and your company get the ability to go from idea to in-production to iterated-in-production faster than your competitor? AI compresses the time toward zero.

Your Moat In the AI Economy is Speed to Outcome

The NBER study found nearly 90% of firms report no productivity impact from AI over the past three years. Ramp found the top quartile of AI spenders on its platform more than doubled revenue since 2023.

Both are true.

One is the average firm.

The other is the firm rebuilding itself around token-to-outcome conversion.

The difference is whether anyone in the building can answer one question:

What outcome did the tokens buy?

If someone can, you have an operating model.

If nobody can, you have a subscription.

ST.

—-

This is part one of The Token Economy — a series on the operating model that survives the next decade.

4 Fintech Companies 💸

1. GenFi - Stablecoins-as-a-Service

GenFi lets any company issue accounts and create virtual accounts on behalf of users, so it can launch FX, pay-in and payout solutions using tokenized money through a single API. The API handles KYB / KYC, minting and redeeming tokens, and settling with multiple stablecoin issuers.

🧠 This is the pain that Bridge, BVNK, etc. fix. There’s a lot of hidden integration pain for PSPs and Neobanks. If you’re trying to offer USDC, USDT and tokenized T-bills, you could face three separate KYB processes, one with each issuer. Now if (as their website says) they’ve made this work in multiple jurisdictions, then that’s a wedge. But umm, I’m not sure how mature this is as an offering (at least, judging by their FAQs, where the answer to every question is the same).

2. XFX - Stablecoin-to-fiat FX for LatAm

XFX lets businesses exchange stablecoins for Latin American fiat currencies — starting with the US dollar, Mexican peso, and Colombian peso. Founded by three ex-Bitso employees who grew frustrated with how hard it was to move between stablecoins and local currencies, XFX handles the FX settlement so payments companies and fintechs don't have to build trading desk relationships themselves.

🧠 The founders built this because they lived the pain at Bitso. They're hiring "quants" and building trading desk relationships, not just an API wrapper. This is a liquidity business, not a SaaS. Very similar in feel to OpenFX.

3. Seapoint - The AI finance back office for Europe

Seapoint is an AI-native finance platform that lets startup founders connect their banks, email and accounting tools and automates reporting, bookkeeping, expense management and payroll from day one. It also provides multi-currency accounts, payments, cards and treasury. It was founded by Stripe's former European CIO, and more than half the team are Stripe alumni.

🧠 Europe needs these kinds of startups. You see plenty of these in the US. And every time I see this as a Brit, I’m thinking, yes, I want this! Connecting email is super interesting; the automation of receiving an invoice is something AI makes just work in a way it very rarely did before.

4. Comfi - B2B Buy Now Pay Later for MENA

Comfi lets SME suppliers in the Middle East offer up to 90-day payment terms to their business customers while getting paid within 24 hours. The platform embeds financing directly into B2B transactions using AI-driven underwriting, replacing the manual credit approvals and stretched payment cycles that trap working capital across the region.

🧠 B2B BNPL has been well-explored in Europe and the US (Billie, Hokodo, Resolve), but MENA is structurally different: SMEs account for the majority of GDP, invoice financing is thin, and traditional banks barely touch them. $65M at pre-Series A for a MENA B2B fintech is a statement. 15,000 invoices processed across 1,000 clients suggests real traction, not vaporware.

Things to know 👀

Claude can now help build pitchbooks, review earnings transcripts, build financial models from data feeds, reconcile data against a GL, and audit statements. The big one that caught my attention was “KYC screener,” which will “assemble entity files, review documents and packages escalations for compliance review.”

🧠 This appears to compete directly with Hebbia, one of Fintech x AI’s big winners. Used by hedge funds, private credit firms, and equity research, they’ve specialized in taking multiple models and making them work efficiently for those use cases.

🧠 Specialists will always outperform the base model if they assume base models will always get better. As an analogy, look at Cursor: since Codex and Claude Code launched, its revenue has increased, because it benefits as AI gets better and adds value.

🧠 This isn’t really doing “KYC” for consumers. It’s preparing the docs for a specific use case. At Sardine, we saw LLMs throw a ton of false positives when used for fraud, unless you build very complex agentic loops around them. It’s this kind of scaffolding that requires problem domain knowledge, which still matters.

🧠 That said, there are countless companies that are not Hebbia or Sardine clients that could use these tools. Anthropic as a baseline provider of capabilities makes sense.

🧠 The labs have also told me that finance is their fastest-growing enterprise segment. So no wonder they’re pushing out all of these features. It’s counterintuitive but interesting that fintech, far from being dead, and incumbents, far from being disrupted, are some of the fastest adopters of AI. Speaking of which…

Anthropic's Forward Deployed Engineers (FDEs) helped FIS build a new AML agent that can help craft SAR narratives. It is launching initially with BMO and Amalgamated Bank, aiming to compress investigations from days to minutes, and will be generally available in H2 2026. The FDEs are embedded inside FIS to co-build, then transfer the playbook so FIS can ship more agents independently. The repeatable IP is a deployment pattern: how a frontier model reasons over the most sensitive data without that data ever leaving the bank's trust perimeter. The roadmap includes credit decisioning, deposit retention, onboarding, and fraud. Client data stays inside FIS-controlled infrastructure, and every agent decision is traceable and auditable.

🧠 Distribution wins. FIS powers “~12% of the global economy.” They do the core systems, payment rails, and deposit and lending systems of record. You can't cold-call your way into that.

🧠 The new AI is basically swallowing the "Value Added Services" people built around FIS. Smart. Because it's not cannibalizing existing FIS revenue (but maybe their partners who do risk AI today).

🧠 Anthropic now has FDEs (yes, the Palantir model) sitting inside FIS, the company that runs core banking. This came days after Customers Bank announced OpenAI would have FDEs on site, with a goal of reducing cost/income from 49% to 40%.

🧠 How will regulators react to "Forward Deployed" engineers inside the banks under Third Party Risk Management (TPRM)? What if one of the engineers or models YOLO-deletes a code base (as happened to PocketOS recently)? Also, does FIS now get questions about Anthropic during examinations because it is subject to the Bank Service Company Act (BSCA)?

🧠 The FIS story is one I think regulators will buy. FIS has the contract, the central data, and the system of record for banks. For a long time, just saying “FIS does it” has been a check in the box with regulators (for better or worse). FIS answers the big model risk governance worry.

Aside: At Fintech Nerdcon this year, we’re doing a 2-day workshop on how model risk works with foundation models, and risks like PocketOS code deletion. We’re building a Tech Sprint in partnership with AIR. Grab yourself a ticket if you want to get involved.

🧠 This model works for any sector: This is a fantastic way for systems of record and incumbents to use their moat and partner with AI companies to drive revenue growth.

🧠 Why wouldn't Anthropic buy a stake in FIS and a system of record company in every vertical? Prove the FDE model works, then push it out to every other company in the vertical? They could also buy up one or two providers in the value added services space with specialist IP and talent to scale that.

🧠 Using FDEs from one of the labs means you’re somewhat locked into their models (at least for an exclusivity period). You could do this all on your own, with modified or custom-trained foundation models. Then you own the IP. But do you have the talent to pull that off, or do your partners?

🧠 Where is Google in this FDE picture or Microsoft? Google arguably has the least right to win in enterprise, but has the models, scale, and compute. Microsoft has the clients but not the model.

Quick hits:

🥊 Chime hit GAAP profitability (18% Adj) with 25% YoY revenue growth. They’re nearly a rule of 40 growth company valued at 3x earnings with 10.2m members. That’s a lending valuation for a business that’s growing rule of 40, and gets the vast majority of its revenue from payments and new platform products. Not investment advice, but, seems out of bound?

🥊 Erebor hit $1bn deposits in 7 weeks. SoFi took 3 years, Mercury took 4, and Chime took 6. The distinction here is that these are corporate deposits from companies that were either deplatformed (defense, crypto), or wanted an API-first bank that was national, not regional. Wholesale deposits are considered much riskier than consumer deposits (run-off of between 25% and 100% vs 5%). And this was a lot of what made SVB so risky when the deposit flight happened. Erebor is structurally more defensive. By paying near-market rates (SOFR-50) on all accounts, they aim to neutralize the "yield-chasing" incentive that usually triggers a bank run. They aren't avoiding lending; they’re just seven weeks old and prioritizing a strong balance sheet.

🥊 FinCEN proposed a rule to reform AML/CTF to focus more on higher-risk activity. FinCEN wants to move away from the “checkbox process” of AML and focus more on effectiveness, and regulators would have to consult with Treasury before any enforcement action took place. I mean, in principle, this sounds amazing. We know most AML is theatre. Can we actually make it about effectiveness? I hope so, but we’ve been talking about this forever. One thing that’s really clear: auditors' and examiners' subjective judgement is becoming less sacrosanct. And when an auditor tells you that you can’t use machine learning, in the year of our lord 2026, that’s long, long overdue (true story from an industry friend).

Good Reads 📚

Goose is Block's answer to Claude Code: a custom harness wired directly into Square and Cash App, which gives its new AI tools Moneybot and Managerbot secure, observable ways to move real money, see inventory and run payroll. It uses background signals, like a move in a stock price or a spike in sales, to queue a human-in-the-loop solution. The idea is that you can click "resolve" before you knew there was a problem. Goose actually pre-dates Claude Code.

Allica, the UK digital bank, one of the fastest growing companies in Europe and the most understated success story in finance, published how they use AI, and true to form, it’s zero waffle and insight dense. They built a skills library for the entire team and updated their design system for agentic coding, allowing them to make new pages 89% faster, and have increased the number of pull requests merged to production by 3.8x YoY.

Tweets of the week 🕊

That's all, folks. 👋


Want more? I also run the Tokenized podcast and newsletter.

(1) All content and views expressed here are the authors' personal opinions and do not reflect the views of any of their employers or employees.

(2) All companies or assets mentioned by the author in which the author has a personal and/or financial interest are denoted with a *. None of the above constitutes investment advice, and you should seek independent advice before making any investment decisions.

(3) Any companies mentioned are top of mind and used for illustrative purposes only.

(4) A team of researchers has not rigorously fact-checked this. Please don't take it as gospel—strong opinions weakly held

(5) Citations may be missing, and I’ve done my best to cite, but I will always aim to update and correct the live version where possible. If I cited you and got the referencing wrong, please reach out